(2023-Apr-10) Yes, Azure Data Factory (ADF) can be used to access and process REST API datasets by retrieving data from web-based applications.

To use ADF for this purpose, you can simply use the Web activity since the data exists in the outer world. You can configure the Web activity by providing the REST API endpoint URL and any required authentication credentials. Next, you can choose the desired HTTP method (e.g., GET, POST, PUT) to retrieve data from the API endpoint, and configure any additional options such as headers or query parameters.

Once you've completed these steps, you can process the output of your Web activity by looping through and parsing the JSON data. You can extract the required elements and save them as per your job requirements. With ADF handling all the work for you, you can sleep peacefully knowing that your data is being processed efficiently.

Image by Guilherme Reis from Pixabay

However, it's essential to keep in mind that some REST APIs may require additional configuration or authentication steps to access their data.

One common issue you may encounter while processing large datasets is API pagination. If you try to process a large dataset at once, you may receive an error message like "Operation on target failed: The length of execution output is over limit (around 4MB currently)," or your specific API may have other constraints that limit the amount of data returned in a single call. To avoid such data throttling issues, you may need to execute multiple requests to retrieve all the data page by page.

Building a custom API pagination mechanism for your Web activity data flow can be challenging.

Another challenge, or rather a best practice approach from a security perspective, is to use additional authentication when accessing the API dataset. It's unlikely that anonymous access to corporate data via REST API would be allowed unless the data is intended to be publicly accessible.

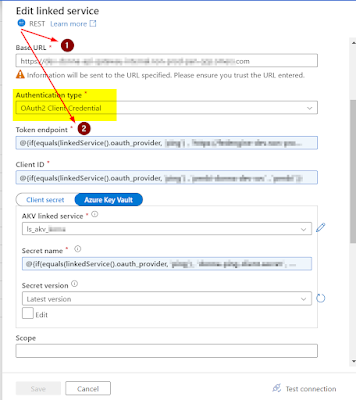

When implementing an authentication process, consider building a mechanism that supports OAuth2 authentication. Although it's still possible to implement it, it will not be an easy task.

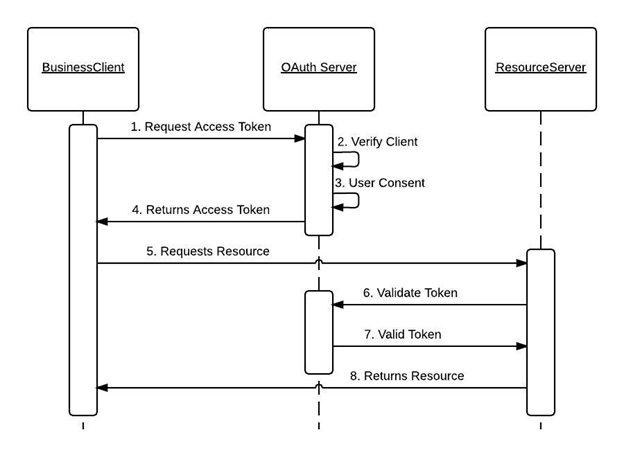

OAuth 2.0 is often used to enable one application to access another application or service without requiring user intervention, where a business client application acts as a client and calls a business service, requesting some business information and passing an access token, the client then is pre-authorized to access the resource.

Original Image: https://docs.oracle.com/cd/E82085_01/160027/JOS%20Implementation%20Guide/Output/oauth.htm

However, Azure Data Factory is not limited to the only Web activity to work with REST API datasets. Its built-in LinkedService capability to connect your REST API endpoint provides a solid foundation to do further data extraction and transformation.

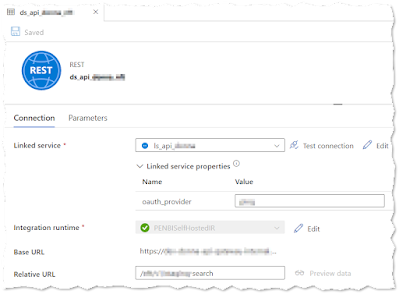

We can also have more flexibility to specify relative URLs toward your target web-based datasets:

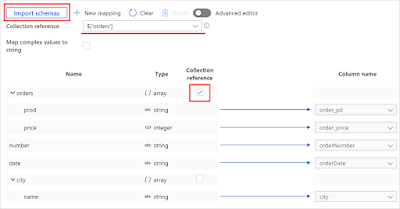

After establishing a connection to the API-based dataset, ADF provides a data preview option that is useful for identifying a suitable starting point in the JSON output collection and mapping the required data fields for subsequent data flows.

So my recommendation to myself, trust Microsoft more with their development tools offering, and spend more time reading the official documentation (and not my blog posts :- ), it is a source of trustworthy information filled with many intricate details and well-explained examples.

MSFT ADF documentation resource: https://learn.microsoft.com/en-us/azure/data-factory/connector-rest?tabs=data-factory

Comments

Post a Comment